whatif

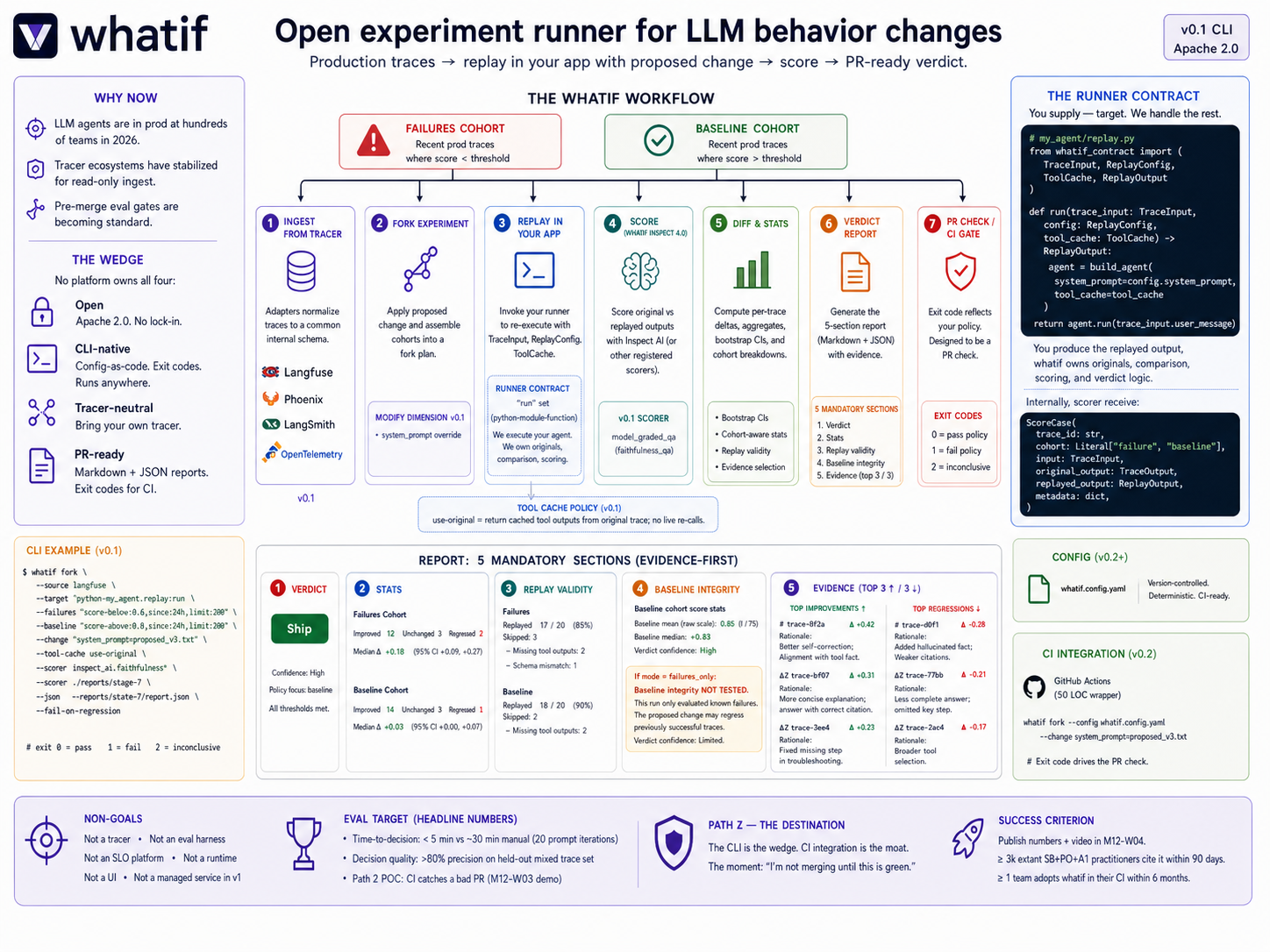

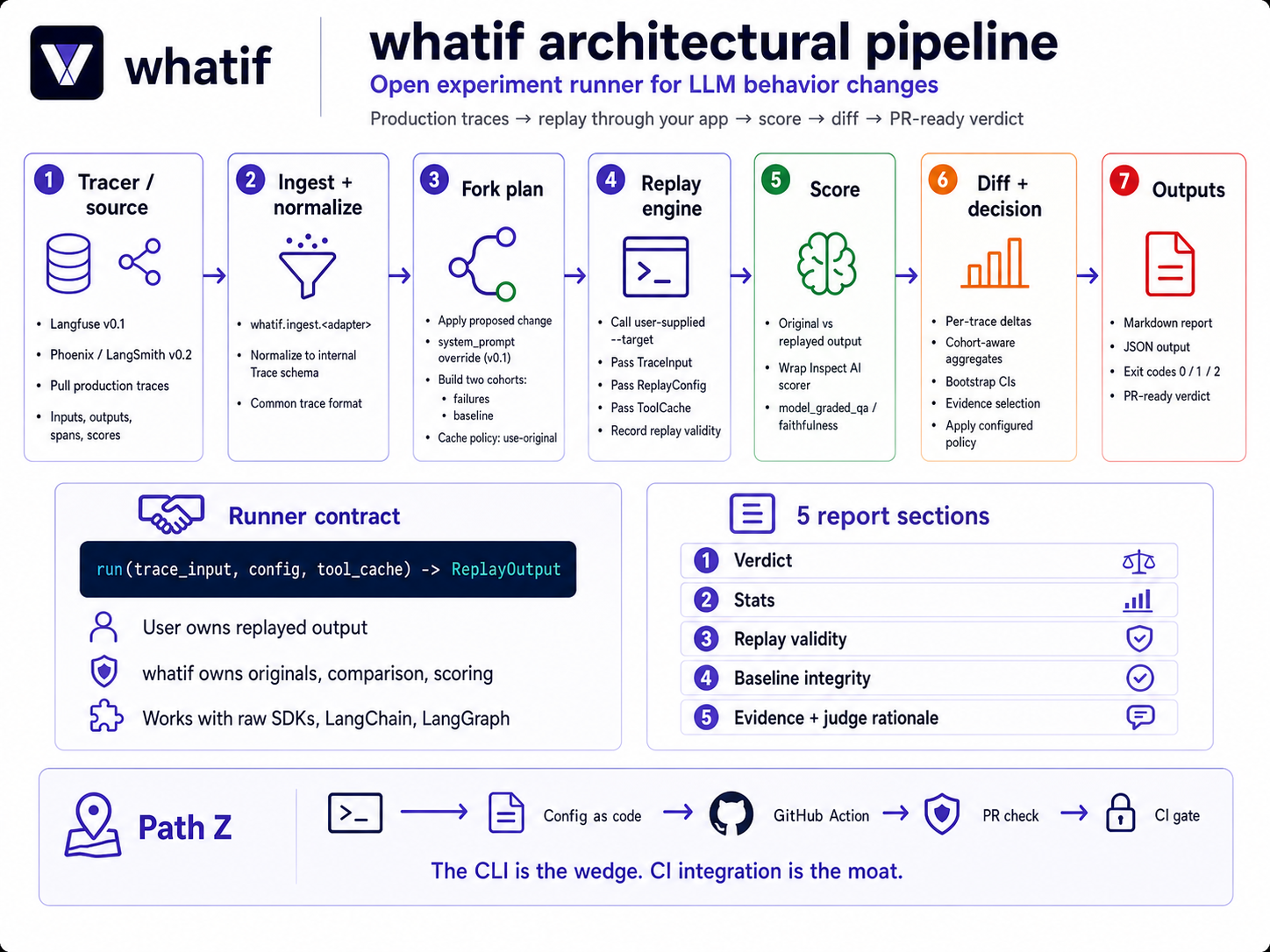

Open experiment runner for LLM behavior changes. Fork production traces, replay with a proposed change, score the diff, emit a PR-ready verdict report.

Why whatif

When you change a prompt, model, or tool in an LLM system, you rarely know whether the change improves behavior. You guess, based on a handful of cherry-picked traces and inconsistent evaluation. Every other step in the workflow has a tool: Langfuse for traces, Inspect AI for scoring, GitHub for PRs. The experiment itself doesn’t.

whatif is the experiment runner. Fork production traces (failed cases plus a representative baseline), replay them with your proposed change (original tool outputs cached so side effects don’t re-fire), score with Inspect AI, and produce a diff + verdict report you can attach to the PR.

You stop shipping changes that fix one failure while silently regressing ten others. You go from “this feels better” to “this improved 14 / 20, regressed 3 — here’s exactly where, and here’s the evidence I’d defend in review.”

What you get

Fork the actual cases that motivated the fix — not synthetic golden sets that were green yesterday and stale today.

Original tool outputs are cached, so destructive side effects don’t re-fire. Live tool replay is opt-in with per-tool allowlists.
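As a rough illustration of cached-tool replay with an opt-in allowlist, consider the sketch below. It is not whatif's implementation: `ToolCall`, `CachedToolReplayer`, `live_tools`, and `live_allowlist` are hypothetical names chosen for this example. Recorded outputs are looked up by tool name and arguments, and a tool only runs live when it is explicitly allowlisted.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolCall:
    """A tool invocation recorded in the original trace (hypothetical shape)."""
    name: str
    args: dict[str, Any]
    output: Any


def _key(name: str, args: dict[str, Any]) -> str:
    # Canonical JSON so that argument order does not affect the lookup.
    return f"{name}:{json.dumps(args, sort_keys=True)}"


@dataclass
class CachedToolReplayer:
    """Serves recorded tool outputs; runs a tool live only if allowlisted."""
    recorded: list[ToolCall]
    live_tools: dict[str, Callable[..., Any]] = field(default_factory=dict)
    live_allowlist: frozenset[str] = frozenset()

    def __post_init__(self) -> None:
        self._cache = {_key(c.name, c.args): c.output for c in self.recorded}

    def call(self, name: str, args: dict[str, Any]) -> Any:
        key = _key(name, args)
        if key in self._cache:
            # Cache hit: return the original output, no side effect re-fires.
            return self._cache[key]
        if name in self.live_allowlist and name in self.live_tools:
            # Cache miss, but the tool was explicitly opted in for live replay.
            return self.live_tools[name](**args)
        raise LookupError(f"no recorded output for {name!r} and live replay not allowed")
```

Raising on a cache miss for a non-allowlisted tool is one possible design choice here; it makes a replay that diverges from the original trace visible instead of silently calling a destructive tool.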

Every experiment runs a failures cohort and a baseline cohort by default. You catch the regression of previously-good traces, not just the rescue of bad ones.
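The two-cohort comparison can be pictured with a small sketch. The names `ScoredTrace`, `classify`, and `summarize`, the cohort labels, and the numeric score scale are assumptions made for illustration, not whatif's data model. Each trace carries a score from the original run and a score from the replay, and the summary counts improvements and regressions per cohort.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ScoredTrace:
    trace_id: str
    cohort: str    # "failures" or "baseline" (hypothetical labels)
    before: float  # score of the original production run
    after: float   # score of the replay with the proposed change


def classify(trace: ScoredTrace, tolerance: float = 0.0) -> str:
    """Label a trace by the direction of its score change."""
    delta = trace.after - trace.before
    if delta > tolerance:
        return "improved"
    if delta < -tolerance:
        return "regressed"
    return "unchanged"


def summarize(traces: list[ScoredTrace]) -> dict[str, Counter]:
    """Count improved / regressed / unchanged traces within each cohort."""
    summary: dict[str, Counter] = {}
    for t in traces:
        summary.setdefault(t.cohort, Counter())[classify(t)] += 1
    return summary
```

Keeping the counts separate per cohort is what surfaces a change that rescues failing traces while breaking previously-good ones.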

Reports include the verdict, the stats, replay validity, baseline integrity, and concrete representative examples with judge rationale. Numbers without rationale are not trustworthy enough to ship from.

Reports are emitted as Markdown and JSON, and exit codes reflect your declared decision policy. whatif is designed to run as a PR check from day 1.
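To make the link between a decision policy and an exit code concrete, here is a minimal sketch. The `DecisionPolicy` fields, the `exit_code` function, and the 0 / 1 convention are all assumptions for illustration; whatif's actual policy options and exit codes may differ.

```python
from dataclasses import dataclass


@dataclass
class DecisionPolicy:
    """Hypothetical policy: thresholds a change must meet to pass the check."""
    min_improved_fraction: float   # required share of the failures cohort that improves
    max_baseline_regressions: int  # tolerated regressions in the baseline cohort


def exit_code(
    policy: DecisionPolicy,
    failures_improved: int,
    failures_total: int,
    baseline_regressed: int,
) -> int:
    """Return 0 if the experiment satisfies the policy, 1 otherwise (assumed convention)."""
    improved_fraction = failures_improved / failures_total if failures_total else 0.0
    passes = (
        improved_fraction >= policy.min_improved_fraction
        and baseline_regressed <= policy.max_baseline_regressions
    )
    return 0 if passes else 1
```

Under this sketch, a policy of `DecisionPolicy(0.5, 0)` would pass a run that improves 14 of 20 failures with no baseline regressions, and fail the same run if 3 baseline traces regress.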

Bring your own tracer. Langfuse first, Phoenix and OpenTelemetry GenAI to follow. The runner contract makes the boundary clean.

Architecture

Read the runner contract deep-dive to see how whatif separates the --target runner contract from the rest of the system.

Status

whatif is pre-alpha through M9; v0.1 begins in M10. The destination — the pre-merge regression gate for LLM behavior — is laid out in the Path Z section.

| Version | Target | What lands |
|---|---|---|
| v0.1 | M10 | Langfuse ingest, prompt override, cached-tool replay, Inspect AI scorer, evidence-first reports, CI exit codes. |
| v0.2 | M11 | Config-file mode, deterministic output, second tracer adapter, model swap, GitHub Action wrapper. |
| v0.3 | M12 | Live-tool replay (opt-in, allowlist), worked CI sample repo. |
| v1.0 | year 2 | The pre-merge regression gate for LLM behavior. |